Introduction

Are your Large Language Model (LLM) outputs consistently 'almost right' yet wrong on crucial details, undermining your personalization efforts or inventory forecasts? The real culprit might not be your prompt engineering, but something far more fundamental: your file format.

Knowledge workers and marketers, especially within dynamic sectors like fashion retail, increasingly rely on LLMs like ChatGPT, Claude, and Gemini for everything from granular market analysis to crafting compelling content briefs. But the default document format we feed these powerful tools, the ubiquitous PDF, is a major and often overlooked source of errors, increased operational costs, and frustrating hallucinations.

This post will reveal why understanding how LLMs truly 'read' documents and prioritizing structured input like Markdown can dramatically improve accuracy, reduce operational costs, and unlock the true, transformative potential of AI for your business, driving better personalization and profitability for Mapp Fashion’s clients.

The PDF Problem: Why Your LLM Hates Fixed Layouts

PDFs are a marvel of digital publishing, celebrated for their ability to ensure perfect visual consistency across any device. However, this very strength becomes a critical weakness when it comes to machine readability, especially for LLMs that crave semantic structure.

Visual Fidelity vs. Semantic Integrity

PDFs excel at preserving visual fidelity. They store text as disconnected strings with absolute coordinates on a page, rather than as a coherent, logical flow. This design inherently prioritizes how a human sees the document over how a machine can understand its underlying structure and relationships. This lack of semantic integrity hinders effective AI processing.

The 'Jumbled Text' Catastrophe

When a naive PDF parser attempts to extract text for an LLM, it often fails to distinguish between headers, footers, sidebars, and the columns of a multi-column layout. The result? An interleaved, incoherent stream of text that's then fed to the LLM. Imagine trying to derive actionable insights from a trend report where Q1 revenue figures are randomly mixed with sustainability initiatives and a footnote about seasonal textile sourcing. This jumbled input forces the model to relate sentences that sit next to each other only because of where they fell on the page, which degrades its reasoning and significantly raises the risk of hallucinations. This is particularly problematic when analyzing detailed product specifications or customer feedback data crucial for your strategies.
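
To make the interleaving concrete, here is a minimal sketch of how reading order goes wrong on a two-column page. The fragments, their coordinates, and the `column_split` value are invented for illustration; real parsers work with far messier data, so treat this as a toy model rather than how any particular PDF library behaves.

```python
from typing import NamedTuple


class Fragment(NamedTuple):
    x: float  # horizontal position of the fragment on the page
    y: float  # vertical position, measured from the top of the page
    text: str


# Hypothetical fragments from a two-column page: left column at x=50, right at x=320.
fragments = [
    Fragment(50, 100, "Q1 revenue rose"),
    Fragment(320, 100, "Our sustainability goals"),
    Fragment(50, 120, "across all regions."),
    Fragment(320, 120, "focus on recycled textiles."),
]


def naive_order(frags: list[Fragment]) -> str:
    """Read top-to-bottom, then left-to-right, ignoring columns."""
    ordered = sorted(frags, key=lambda f: (f.y, f.x))
    return " ".join(f.text for f in ordered)


def column_aware_order(frags: list[Fragment], column_split: float) -> str:
    """Read the whole left column first, then the whole right column."""
    ordered = sorted(frags, key=lambda f: (f.x >= column_split, f.y, f.x))
    return " ".join(f.text for f in ordered)


print(naive_order(fragments))
print(column_aware_order(fragments, column_split=200))
```

The naive ordering splices the revenue sentence into the sustainability sentence, while the column-aware ordering keeps each one intact. A Markdown source never faces this choice, because its reading order is simply the order of the lines in the file.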

The Hidden Cost of Multimodal PDFs (Gemini Example)

With the advent of multimodal LLMs like Google's Gemini, PDF handling appears to have improved, because these models process pages more like images to preserve layout. While visually impressive, this approach comes with a hidden cost: significant token overhead. A single, sparse PDF page can consume as many as 560 tokens under default settings, however little text it carries. That is the equivalent of hundreds of words of plain text, so valuable context budget is effectively spent on empty space. For Chief Marketing Officers or Heads of E-commerce uploading extensive brand guidelines, market research reports, or detailed customer persona documents in PDF format, this can hit context limits prematurely, preventing deeper AI analysis and reducing the richness of insights needed for strategic decision-making.
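
A quick back-of-the-envelope calculation shows how fast a fixed per-page cost adds up. The sketch below uses the 560-token figure quoted above and assumes a 128,000-token context window and a 100-page document purely for illustration; actual per-page costs depend on the model and its settings.

```python
TOKENS_PER_PDF_PAGE = 560  # figure quoted above; real values vary by model and settings
CONTEXT_WINDOW = 128_000   # assumed context size, for illustration only


def pdf_token_cost(pages: int, tokens_per_page: int = TOKENS_PER_PDF_PAGE) -> int:
    """Tokens consumed when every page is charged at a fixed rate."""
    return pages * tokens_per_page


def share_of_context(tokens: int, window: int = CONTEXT_WINDOW) -> float:
    """Fraction of the context window used up by those tokens."""
    return tokens / window


cost = pdf_token_cost(100)  # a hypothetical 100-page brand guideline
print(f"{cost} tokens, {share_of_context(cost):.1%} of the context window")
```

Under these assumptions, a 100-page PDF uses more than two fifths of the window before a single question has been asked.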

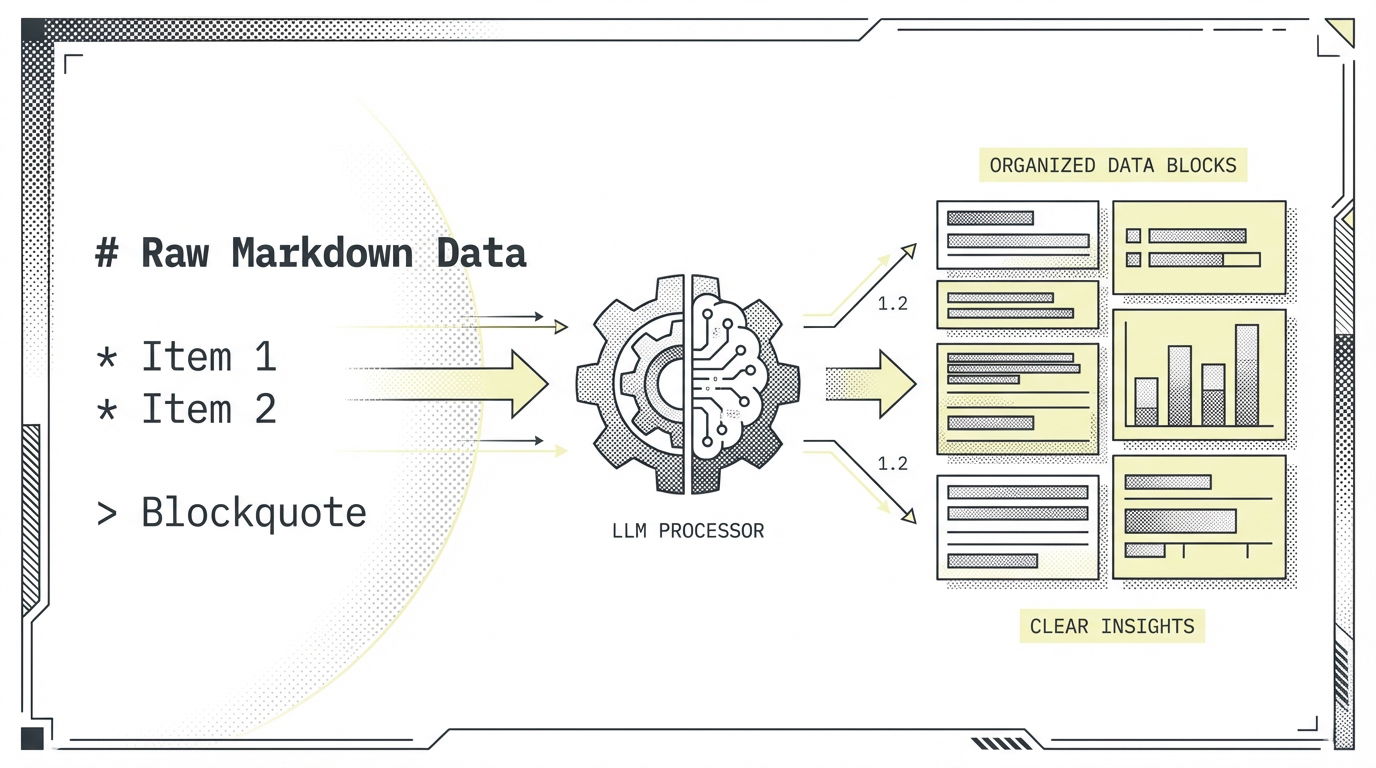

Markdown to the Rescue: Your LLM's Preferred Language

If PDFs are the bane of accurate LLM processing, then Markdown is their best friend. This simple, plain-text formatting language offers native structural advantages that align perfectly with how LLMs are designed to process information, providing a pathway to enhanced AI accuracy for fashion retailers.

Native Structural Advantage

Markdown uses plain-text symbols (like # for headings, - for list items, * for emphasis) to explicitly define hierarchy and semantic structure. Crucially, LLMs are effectively "Markdown-aware": they have been trained on vast amounts of web data, documentation, and code, much of which uses Markdown. This explicit structure provides unambiguous signals, ensuring the model understands not just what the text says, but also what role each segment plays (e.g., this is a main heading for a product line, this is a sub-point about its features, this is a list of customer benefits).
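
To illustrate how little work it takes to recover those roles, here is a small sketch that labels each line of a Markdown snippet by the marker it starts with. It covers only headings and simple bullets, the sample text is made up, and it is not a full Markdown parser.

```python
import re


def label_roles(markdown: str) -> list[tuple[str, str]]:
    """Tag each non-empty line with the structural role its Markdown marker declares."""
    labelled = []
    for line in markdown.splitlines():
        stripped = line.strip()
        if not stripped:
            continue
        heading = re.match(r"^(#{1,6})\s+(.*)$", stripped)
        bullet = re.match(r"^[-*+]\s+(.*)$", stripped)
        if heading:
            labelled.append((f"heading-{len(heading.group(1))}", heading.group(2)))
        elif bullet:
            labelled.append(("list-item", bullet.group(1)))
        else:
            labelled.append(("paragraph", stripped))
    return labelled


# Hypothetical product note, used only to exercise the function.
sample = """# Spring Collection
## Features
- Recycled cotton
- Relaxed fit
Launches in March."""

for role, text in label_roles(sample):
    print(role, "|", text)
```

The same information in a PDF would have to be guessed from font sizes and positions; here it is stated outright in the first characters of each line.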

Token Efficiency & Context Window Expansion

Beyond clarity, Markdown offers significant efficiency. Data shows that Markdown is approximately 16.3% more token-efficient than JSON for the same dataset. For an LLM with a 128,000-token context window that would otherwise be filled with JSON, this translates to saving nearly 21,000 tokens (16.3% of 128,000 is 20,864), or more than 15,000 words of additional content! For fashion retail marketers and data teams, this means fitting more comprehensive research, detailed competitor analysis, or extensive customer feedback within a single prompt. This allows for deeper reasoning, more holistic analysis of trends and opportunities, and ultimately, more accurate insights to drive personalized customer journeys and optimized inventory.
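
The arithmetic behind that estimate is easy to check, and a small comparison hints at where the saving comes from. The sketch below assumes roughly 0.75 words per token (a common rule of thumb for English) and uses invented inventory rows. It compares character counts, which are only a rough proxy for tokens, so read it as an illustration rather than a measurement.

```python
import json

CONTEXT_WINDOW = 128_000  # tokens, as in the example above
EFFICIENCY_GAIN = 0.163   # the 16.3% figure quoted above
WORDS_PER_TOKEN = 0.75    # rough rule of thumb for English text (assumption)

tokens_saved = round(CONTEXT_WINDOW * EFFICIENCY_GAIN)
words_saved = round(tokens_saved * WORDS_PER_TOKEN)

# Hypothetical inventory rows, used only to compare the two serializations.
rows = [
    {"sku": "A1", "name": "Linen shirt", "stock": 12},
    {"sku": "B2", "name": "Denim jacket", "stock": 7},
    {"sku": "C3", "name": "Wool scarf", "stock": 30},
]


def to_markdown_table(records: list[dict]) -> str:
    """Render records as a Markdown table; column names appear once, in the header."""
    columns = list(records[0])
    lines = [
        "| " + " | ".join(columns) + " |",
        "| " + " | ".join("---" for _ in columns) + " |",
    ]
    for record in records:
        lines.append("| " + " | ".join(str(record[c]) for c in columns) + " |")
    return "\n".join(lines)


as_json = json.dumps(rows)
as_markdown = to_markdown_table(rows)
print(tokens_saved, words_saved)
print(len(as_json), len(as_markdown))  # character counts, a rough proxy for tokens
```

JSON repeats every key, quote, and brace on every row, while the Markdown table names each column once. That repetition is the likely source of much of the difference, although the exact percentage will vary with the data and the tokenizer.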

Hallucination Guardrails

The explicit formatting inherent in Markdown minimizes ambiguity. By providing clear contextual anchors (e.g., "this is a subheading for 'Spring Collection Features'", "this is a list of 'Key Demographic Insights'"), Markdown acts as effective 'guardrails' for the model's reasoning. Some studies report task completion rates improving by as much as 58% thanks to this added structural clarity. For Mapp Fashion's clients, that means more reliable and actionable insights, improving everything from marketing campaign effectiveness to financial forecasting.

Actionable Tips for Marketers & Knowledge Workers

Ready to supercharge your LLM interactions and unlock peak AI accuracy? Here are practical steps to adopt a Markdown-first approach within your fashion retail operations:

Audit Your Input Quality & Clean with LLMs

Before feeding any critical document to an LLM, it's vital to audit the quality of the raw text extraction. Use an LLM's 'Code Interpreter' feature (available in tools like Custom GPTs, Gemini Advanced, or Claude) to review how the model "sees" the document. This simple step can reveal shocking inconsistencies and parsing errors you never knew existed.
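
If you have the extracted text to hand, a few crude checks can flag trouble before a human or an LLM reviews it. The sketch below is one possible starting point; the function name, the specific checks, and the sample text are all illustrative assumptions rather than an established standard.

```python
import re


def audit_extracted_text(text: str) -> dict:
    """Count simple warning signs in text extracted from a PDF.

    The checks are illustrative assumptions, not an established standard.
    """
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    short_lines = sum(1 for line in lines if len(line.split()) <= 3)
    return {
        "lines": len(lines),
        # Many very short lines often point to broken columns or stray headers.
        "short_line_ratio": short_lines / len(lines) if lines else 0.0,
        # A letter followed by a hyphen at line end suggests a word split in two.
        "hyphen_breaks": sum(1 for line in lines if re.search(r"[A-Za-z]-$", line)),
        # Lines that are only a number or "Page N" are likely page furniture.
        "page_number_lines": sum(
            1 for line in lines if re.fullmatch(r"(Page\s+)?\d+", line)
        ),
    }


# Hypothetical extraction output with a split word and two page markers.
sample = (
    "Trend Report 2024\n"
    "Q1 revenue grew in all re-\n"
    "gions, led by outerwear.\n"
    "3\n"
    "Sustainability\n"
    "Page 4\n"
)
print(audit_extracted_text(sample))
```

High counts do not prove the extraction is bad, but they are a cheap signal that the document deserves a closer look before it reaches the model.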

Even better, leverage the LLM itself to clean your files. You can provide a prompt similar to this:

Convert the attached file to a Markdown (.md) version to make the text easily readable by machines as well as humans. Remove unnecessary line breaks, but also add necessary line breaks.

Do not change the actual text or interpret it. Maintain integrity of the actual body.

Add the necessary Markdown syntax:

# Header

## Subheader

* Bullet Point

For every table:

Provide a structured text version where each row is rewritten as complete sentences. Each rewritten row must stand alone and carry the same meaning as the original table.

This prompt guides the LLM to perform a structural cleanup, making the output far more digestible and accurate for subsequent analysis.

Standardize on Markdown

For all new content creation – whether it’s personal notes, Standard Operating Procedures (SOPs), content briefs, internal documentation, or new product descriptions – make Markdown your primary drafting format. Tools like Notion, Obsidian, VS Code, and even some online editors support native Markdown editing, making adoption seamless for your teams.

Embrace the Hybrid Strategy for Complex Documents

Existing PDFs and highly formatted DOCX files aren't lost causes. For these legacy or visually rich documents, employ a hybrid strategy:

- PDF to DOCX First: Convert PDFs to DOCX files. This crucial intermediate step allows you to manually verify the layout and correct major parsing errors that a direct PDF-to-Markdown conversion might miss. It's your chance to ensure the human-readable structure is accurately preserved.

- DOCX to Markdown: Once cleaned and verified in DOCX, export the document to Markdown. This ensures structural clarity and optimal readability for LLM consumption, maximizing the value you extract from these documents.
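
The second step can be scripted once the DOCX has been checked by hand. The sketch below assumes the open-source pandoc converter is installed and on your PATH, which the workflow above does not require; the file name is a placeholder, and any tool with a DOCX-to-Markdown export would serve equally well.

```python
import subprocess
from pathlib import Path


def build_pandoc_command(docx_path: str) -> list[str]:
    """Build a pandoc command that converts a DOCX file to GitHub-flavored Markdown."""
    source = Path(docx_path)
    target = source.with_suffix(".md")  # same name, .md extension
    return ["pandoc", str(source), "-f", "docx", "-t", "gfm", "-o", str(target)]


def convert_docx_to_markdown(docx_path: str) -> Path:
    """Run the conversion; raises if pandoc is missing or reports an error."""
    command = build_pandoc_command(docx_path)
    subprocess.run(command, check=True)
    return Path(command[-1])


# Show the command without running it; "brand_guidelines.docx" is a placeholder name.
print(" ".join(build_pandoc_command("brand_guidelines.docx")))
```

Keeping the command builder separate from the function that runs it makes it easy to review exactly what will be executed before converting a whole folder of documents.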

Master the 'Narrative Table' (Fact-per-Row) Strategy

For high-stakes tabular data, such as ideal customer profile (ICP) personas, competitor analysis matrices, feature comparisons, or detailed inventory reports, avoid directly pasting tables. The link between a cell and its column header is implicit, and it is easily lost when a table is flattened to text or split into chunks, so LLMs can struggle with it. Instead, convert each row into a descriptive, explicit sentence. For example, instead of a table with columns "Persona," "Pain Point," "Solution," write: "Persona X (e.g., 'Gen Z Trendsetter') experiences Pain Point Y (e.g., 'struggles to find unique, sustainable fashion'). Our solution addresses this by offering Z (e.g., 'AI-curated ethical collections')." This 'narrative table' approach eliminates ambiguity, creates robust Retrieval-Augmented Generation (RAG) chunks, and ensures context is preserved for the LLM, leading to more precise AI-driven insights.
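
For larger tables, the row-to-sentence conversion can be automated. Here is a minimal sketch using a fill-in template; the column names, the template wording, and the sample row are illustrative and would need adapting to your own data.

```python
def rows_to_sentences(rows: list[dict[str, str]], template: str) -> list[str]:
    """Turn each table row into a standalone sentence using a fill-in template."""
    return [template.format(**row) for row in rows]


# Hypothetical persona table; the columns mirror the example above.
personas = [
    {
        "persona": "Gen Z Trendsetter",
        "pain_point": "struggles to find unique, sustainable fashion",
        "solution": "AI-curated ethical collections",
    },
]

template = (
    "The {persona} persona {pain_point}. "
    "Our solution addresses this by offering {solution}."
)

for sentence in rows_to_sentences(personas, template):
    print(sentence)
```

Because every sentence names its own persona, pain point, and solution, each one still makes sense if a retrieval system pulls it out of the document on its own.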

Conclusion

Moving beyond the visual comfort of PDFs to the structural clarity of inputs like Markdown isn't just a technical tweak; it's a fundamental shift in how we interact with AI. This change directly impacts the accuracy, efficiency, and overall reliability of your LLM outputs, translating into better business decisions, improved profitability, reduced return rates, and enhanced customer experiences for fashion retailers.

While vision-language model (VLM) pipelines are continually improving, the clarity, structural integrity, and cost-effectiveness of Markdown remain unmatched for text-heavy analytical tasks. It’s a strategic investment in getting the most out of your AI and ensuring your data-driven marketing strategies truly resonate.

Start experimenting with Markdown for your LLM inputs today. Leverage the power of your LLM to clean your documents and witness the transformation in output quality.